Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets

| Lists: | pgsql-hackers |

|---|

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | <pgsql-hackers(at)postgresql(dot)org> |

| Cc: | "Bryce Cutt" <pandasuit(at)gmail(dot)com>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Subject: | Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-10-20 22:42:49 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C1683@exchange20.mercury.ad.ubc.ca |


We propose a patch that improves hybrid hash join's performance for large multi-batch joins where the probe relation has skew.

Project name: Histojoin

Patch file: histojoin_v1.patch

This patch implements the Histojoin join algorithm as an optional feature added to the standard Hybrid Hash Join (HHJ). A flag enables or disables the Histojoin features; when Histojoin is disabled, HHJ behaves as normal. The Histojoin features allow HHJ to use PostgreSQL's statistics to do skew-aware partitioning. The basic idea is to keep the build relation tuples whose join values occur frequently in the probe relation in a small in-memory hash table, so that the matching probe tuples can be joined immediately instead of being written to disk and reread in a later batch. For skewed data sets, this improves HHJ performance by 10% to 50% when multiple batches are used. The performance improvements of this patch can be seen in the paper (pages 25-30) at:

http://people.ok.ubc.ca/rlawrenc/histojoin2.pdf
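A minimal C sketch of the skew-aware routing idea, under stated assumptions. Every name here (`route_tuple`, `Route`, `is_probe_mcv`, the placeholder hash) is hypothetical and not taken from histojoin_v1.patch. The `mcvs` array stands in for the probe relation's Most Common Value statistics, and `use_mcvs` plays the role of the enable_hashjoin_usestatmcvs flag. The sketch also ignores the fact that HHJ keeps its first batch in memory.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical destinations for a tuple during partitioning. */
typedef enum { DEST_SKEW_TABLE, DEST_BATCH } Destination;

typedef struct {
    Destination dest;
    int batchno;            /* valid only when dest == DEST_BATCH */
} Route;

/* Linear search of the probe side's MCV list; a real implementation
 * would keep these values in a hash table. */
static bool is_probe_mcv(int32_t key, const int32_t *mcvs, size_t nmcvs)
{
    for (size_t i = 0; i < nmcvs; i++)
        if (mcvs[i] == key)
            return true;
    return false;
}

/* Placeholder hash (Knuth's multiplicative constant), standing in
 * for the join key's real hash function. */
static uint32_t hash_key(int32_t key)
{
    return (uint32_t) key * 2654435761u;
}

/* Skew-aware partitioning: keys that are frequent on the probe side go
 * to the small in-memory skew table; all other keys are assigned to a
 * batch by hash, as in plain hybrid hash join. */
static Route route_tuple(int32_t key, bool use_mcvs,
                         const int32_t *mcvs, size_t nmcvs, int nbatch)
{
    Route r;

    assert(nbatch > 0);
    if (use_mcvs && is_probe_mcv(key, mcvs, nmcvs)) {
        r.dest = DEST_SKEW_TABLE;
        r.batchno = -1;
    } else {
        r.dest = DEST_BATCH;
        r.batchno = (int) (hash_key(key) % (uint32_t) nbatch);
    }
    return r;
}
```

In this simplified model, the same function is applied to both inputs. A probe tuple whose key is an MCV is joined against the in-memory skew table right away instead of being spilled. Every other key lands in the same batch as its matching build tuples, because both sides use the same hash.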

All generators and materials needed to verify these results can be provided.

This is a patch against the HEAD of the repository.

This patch does not contain platform-specific code. It compiles and has been tested on our machines on both Windows (MSVC++) and Linux (GCC).

Currently the Histojoin feature is enabled by default. It is used whenever HHJ is used and Most Common Value (MCV) statistics are available on the probe-side base relation of the join. To disable this feature, set the enable_hashjoin_usestatmcvs flag to off in the database configuration file or at run time with the SET command.

One limitation of the patch is that Most Common Value (MCV) statistics are only determined when the probe relation is produced by a scan operator. Using MCVs would also be beneficial when the probe relation is not a base scan, but we were unable to determine how to find statistics from a base relation after other operators have been performed. Removing this limitation is a potential improvement not included in the patch.

This patch was created by Bryce Cutt as part of his work on his M.Sc. thesis.

--

Dr. Ramon Lawrence

Assistant Professor, Department of Computer Science, University of British Columbia Okanagan

E-mail: ramon(dot)lawrence(at)ubc(dot)ca

| Attachment | Content-Type | Size |

|---|---|---|

| histojoin_v1.patch | application/octet-stream | 17.6 KB |

| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-01 22:41:48 |

| Message-ID: | e7e0a2570811011541x28612963w1f17dcb6d2fe846a@mail.gmail.com |


On Mon, Oct 20, 2008 at 4:42 PM, Lawrence, Ramon <ramon(dot)lawrence(at)ubc(dot)ca> wrote:

> We propose a patch that improves hybrid hash join's performance for large

> multi-batch joins where the probe relation has skew.


> All generators and materials needed to verify these results can be provided.


I'm interested in trying to review this patch. I haven't done a patch review before, so I can't exactly promise grand results, but could you provide me with the data to check your results? In the meantime I'll go read the paper.

- Josh / eggyknap

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

| Cc: | <pgsql-hackers(at)postgresql(dot)org>, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-02 23:48:36 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C16BC@exchange20.mercury.ad.ubc.ca |


Joshua,

Thank you for offering to review the patch.

The easiest way to test would be to generate your own TPC-H data and load it into a database for testing. I have posted the TPC-H generator at:

http://people.ok.ubc.ca/rlawrenc/TPCHSkew.zip

The generator can produce skewed data sets. It was produced by Microsoft Research.

After unzipping, on a Windows machine, you can just run the command:

dbgen -s 1 -z 1

This will produce a TPC-H database of scale 1 GB with a Zipfian skew of z=1. More information on the generator is in the document README-S.DOC. Source is provided for the generator, so you should be able to run it on other operating systems as well.
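The following C sketch gives a rough feel for what `-z 1` implies. It computes the share of tuples whose value is among the k most frequent of n distinct values under an idealized Zipf distribution, where the i-th most common value has weight 1/i^z. This is a textbook Zipf model, not the generator's actual code. It also accepts only integer exponents, to avoid depending on libm. In PostgreSQL, the corresponding observed quantity would be the sum of a column's MCV frequencies (`pg_stats.most_common_freqs`). This share roughly approximates the fraction of probe tuples that an in-memory table holding those k values could join without spilling.

```c
#include <assert.h>

/* Share of tuples whose value is among the k most frequent of n
 * distinct values, assuming an idealized Zipf distribution in which
 * the i-th most common value has weight 1 / i^z:
 *
 *   share(k) = (sum_{i=1..k} i^-z) / (sum_{i=1..n} i^-z)
 *
 * z is restricted to non-negative integers so that no libm call is
 * needed; z = 0 means no skew (uniform). */
static double zipf_top_k_share(int k, int n, unsigned z)
{
    double top = 0.0, total = 0.0;

    assert(n > 0 && k >= 0 && k <= n);
    for (int i = 1; i <= n; i++) {
        double denom = 1.0;
        for (unsigned e = 0; e < z; e++)
            denom *= (double) i;        /* denom = i^z */
        total += 1.0 / denom;
        if (i <= k)
            top += 1.0 / denom;
    }
    return top / total;
}
```

For example, with n = 100 distinct values, `zipf_top_k_share(10, 100, 1)` is about 0.56 (the ratio of harmonic numbers H_10 / H_100). In other words, the ten most common values cover more than half of the tuples. With z = 0 (no skew), the same call gives 0.10.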

The schema DDL is at:

http://people.ok.ubc.ca/rlawrenc/tpch_pg_ddl.txt

Note that the load time is 1-2 hours for the 1 GB data set and about 24 hours for the 10 GB data set. I recommend that you not add the foreign keys until after the data is loaded.

The other alternative is for us to make a pg_dump of our data sets. However, the download would be quite large, and it would take a couple of days for us to get you the data in that form.

--

Dr. Ramon Lawrence

Assistant Professor, Department of Computer Science, University of British Columbia Okanagan

E-mail: ramon(dot)lawrence(at)ubc(dot)ca


| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-03 00:41:55 |

| Message-ID: | e7e0a2570811021641s560a7c27r6816946e766102f3@mail.gmail.com |


On Sun, Nov 2, 2008 at 4:48 PM, Lawrence, Ramon <ramon(dot)lawrence(at)ubc(dot)ca> wrote:

> Joshua,

>

> Thank you for offering to review the patch.

>

> The easiest way to test would be to generate your own TPC-H data and

> load it into a database for testing. I have posted the TPC-H generator

> at:

>

> http://people.ok.ubc.ca/rlawrenc/TPCHSkew.zip

>

> The generator can produce skewed data sets. It was produced by

> Microsoft Research.

>

> After unzipping, on a Windows machine, you can just run the command:

>

> dbgen -s 1 -z 1

>

> This will produce a TPC-H database of scale 1 GB with a Zipfian skew of

> z=1. More information on the generator is in the document README-S.DOC.

> Source is provided for the generator, so you should be able to run it on

> other operating systems as well.

>

> The schema DDL is at:

>

> http://people.ok.ubc.ca/rlawrenc/tpch_pg_ddl.txt

>

> Note that the load time for 1G data is 1-2 hours and for 10G data is

> about 24 hours. I recommend you do not add the foreign keys until after

> the data is loaded.

>

> The other alternative is to do a pgdump on our data sets. However, the

> download size would be quite large, and it will take a couple of days

> for us to get you the data in that form.

>

> --

> Dr. Ramon Lawrence

> Assistant Professor, Department of Computer Science, University of

> British Columbia Okanagan

> E-mail: ramon(dot)lawrence(at)ubc(dot)ca

I'll try out the TPC-H generator first :) Thanks.

- Josh

| From: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | "Joshua Tolley" <eggyknap(at)gmail(dot)com>, pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-03 01:36:24 |

| Message-ID: | 4873.1225676184@sss.pgh.pa.us |


"Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> writes:

> The easiest way to test would be to generate your own TPC-H data and

> load it into a database for testing. I have posted the TPC-H generator

> at:

> http://people.ok.ubc.ca/rlawrenc/TPCHSkew.zip

> The generator can produce skewed data sets. It was produced by

> Microsoft Research.

What alternatives are there for people who do not run Windows?

regards, tom lane

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

| Cc: | "Joshua Tolley" <eggyknap(at)gmail(dot)com>, <pgsql-hackers(at)postgresql(dot)org>, "Bryce Cutt" <pandasuit(at)gmail(dot)com>, "Michael Henderson" <mikecubed(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-03 02:34:20 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C16C1@exchange20.mercury.ad.ubc.ca |


> From: Tom Lane [mailto:tgl(at)sss(dot)pgh(dot)pa(dot)us]

> What alternatives are there for people who do not run Windows?

>

> regards, tom lane

The TPC-H generator is a standard code base provided at http://www.tpc.org/tpch/. We have been able to compile this code on Linux.

However, we were unable to get the Microsoft modifications to this code to compile on Linux (although they are supposed to be portable), so we just ran the Windows version under Wine on our Debian test machine.

I have also posted the text files for the TPC-H 1G 1Z data set at:

http://people.ok.ubc.ca/rlawrenc/tpch1g1z.zip

Note that you need to trim the extra characters at the end of the lines for PostgreSQL to read them properly.
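The message does not say which characters need trimming. The following minimal C sketch assumes the usual dbgen output format: every row ends with a trailing `|` delimiter, and files produced on Windows may also carry a carriage return. That is an assumption, not something stated in the thread. `trim_tbl_line` is a hypothetical helper, not part of the posted files or of PostgreSQL.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical helper (assumes dbgen-style rows): strips, in place, a
 * trailing newline, an optional carriage return (Windows line ending),
 * and one trailing '|' field delimiter. Returns the new length. */
static size_t trim_tbl_line(char *line)
{
    size_t len;

    assert(line != NULL);
    len = strlen(line);
    if (len > 0 && line[len - 1] == '\n')
        line[--len] = '\0';
    if (len > 0 && line[len - 1] == '\r')
        line[--len] = '\0';
    if (len > 0 && line[len - 1] == '|')
        line[--len] = '\0';
    return len;
}
```

To use it, read each row with `fgets` into a buffer large enough for the longest row, pass the row to `trim_tbl_line`, and write the result followed by `'\n'`. The cleaned files could then be loaded with `COPY ... WITH DELIMITER '|'`.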

Since the data takes a while to generate and load, we can also provide a compressed version of the PostgreSQL data directory for the databases, with the data already loaded.

--

Ramon Lawrence

| From: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | pgsql-hackers(at)postgresql(dot)org, Bryce Cutt <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-05 13:19:30 |

| Message-ID: | 20081105131930.GC18367@polonium.part.net |


On Mon, Oct 20, 2008 at 03:42:49PM -0700, Lawrence, Ramon wrote:

> We propose a patch that improves hybrid hash join's performance for large

> multi-batch joins where the probe relation has skew.

I'm running into problems with this patch. It applies cleanly, and the technique you provided for generating sample data works just fine (though I admit I haven't verified that the expected skew exists in the data). But the server crashes when I try to load the data. The backtrace is below, labeled "Backtrace 1". Since the crash happens in ExecScanHashMostCommonTuples, I figure it's caused by the patch and not by something else odd (unless perhaps my hardware is flaky; I'll try it on other hardware as soon as I can, to verify). Note that I'm running this on Ubuntu 8.10, 32-bit x86, with a kernel that Ubuntu labels "2.6.27-7-generic #1 SMP". The statement being executed at the time was "ALTER TABLE SUPPLIER ADD CONSTRAINT SUPPLIER_FK1 FOREIGN KEY (S_NATIONKEY) references NATION (N_NATIONKEY);"

Further, when I go back into the database in psql, simply issuing a "\d" command crashes the backend with a similar backtrace, labeled "Backtrace 2" below. The query underlying \d and its EXPLAIN output are also included, just for kicks.

- Josh

*****************************************

BACKTRACE 1

****************************************

Core was generated by `postgres: jtolley jtolley [local] ALTE'.

Program terminated with signal 6, Aborted.

[New process 20407]

#0 0xb80b0430 in __kernel_vsyscall ()

(gdb) bt

#0 0xb80b0430 in __kernel_vsyscall ()

#1 0xb7f22880 in raise () from /lib/tls/i686/cmov/libc.so.6

#2 0xb7f24248 in abort () from /lib/tls/i686/cmov/libc.so.6

#3 0x0831540e in ExceptionalCondition (conditionName=0x8433274 "!(hjstate->hj_OuterTupleMostCommonValuePartition < hashtable->nMostCommonTuplePartitions)", errorType=0x834b66d "FailedAssertion", fileName=0x84331d9 "nodeHash.c", lineNumber=880) at assert.c:57

#4 0x081b457b in ExecScanHashMostCommonTuples (hjstate=0x8720a6c, econtext=0x8720af8) at nodeHash.c:880

#5 0x081b60de in ExecHashJoin (node=0x8720a6c) at nodeHashjoin.c:357

#6 0x081a4748 in ExecProcNode (node=0x8720a6c) at execProcnode.c:406

#7 0x081a242b in standard_ExecutorRun (queryDesc=0x870957c, direction=ForwardScanDirection, count=1) at execMain.c:1343

#8 0x081c2036 in _SPI_execute_plan (plan=0x87181bc, paramLI=0x0, snapshot=0x8485300, crosscheck_snapshot=0x0, read_only=1 '\001', fire_triggers=0 '\0', tcount=1) at spi.c:1976

#9 0x081c2350 in SPI_execute_snapshot (plan=0x87181bc, Values=0x0, Nulls=0x0, snapshot=0x8485300, crosscheck_snapshot=0x0, read_only=<value optimized out>, fire_triggers=<value optimized out>, tcount=1) at spi.c:408

#10 0x082e1921 in RI_Initial_Check (trigger=0xbfeb0afc, fk_rel=0xb5a21938, pk_rel=0xb5a20754) at ri_triggers.c:2763

#11 0x08178613 in ATRewriteTables (wqueue=0xbfeb0d88) at tablecmds.c:5026

#12 0x0817ef36 in ATController (rel=0xb5a21938, cmds=<value optimized out>, recurse=<value optimized out>) at tablecmds.c:2294

#13 0x08261dd5 in ProcessUtility (parsetree=0x86ca17c, queryString=0x86c96ec "ALTER TABLE SUPPLIER\nADD CONSTRAINT SUPPLIER_FK1 FOREIGN KEY (S_NATIONKEY) references NATION (N_NATIONKEY);", params=0x0, isTopLevel=1 '\001', dest=0x86ca2b4, completionTag=0xbfeb0fc8 "") at utility.c:569

#14 0x0825e2ae in PortalRunUtility (portal=0x86fadfc, utilityStmt=0x86ca17c, isTopLevel=<value optimized out>, dest=0x86ca2b4, completionTag=0xbfeb0fc8 "") at pquery.c:1176

#15 0x0825f2c0 in PortalRunMulti (portal=0x86fadfc, isTopLevel=<value optimized out>, dest=0x86ca2b4, altdest=0x86ca2b4, completionTag=0xbfeb0fc8 "") at pquery.c:1281

#16 0x0825fb54 in PortalRun (portal=0x86fadfc, count=2147483647, isTopLevel=6 '\006', dest=0x86ca2b4, altdest=0x86ca2b4, completionTag=0xbfeb0fc8 "") at pquery.c:812

#17 0x0825a757 in exec_simple_query (query_string=0x86c96ec "ALTER TABLE SUPPLIER\nADD CONSTRAINT SUPPLIER_FK1 FOREIGN KEY (S_NATIONKEY) references NATION (N_NATIONKEY);") at postgres.c:992

#18 0x0825bfff in PostgresMain (argc=4, argv=0x8667b08, username=0x8667ae0 "jtolley") at postgres.c:3569

#19 0x082261cf in ServerLoop () at postmaster.c:3258

#20 0x08227190 in PostmasterMain (argc=1, argv=0x8664250) at postmaster.c:1031

#21 0x081cc126 in main (argc=1, argv=0x8664250) at main.c:188

(gdb)

*****************************************

BACKTRACE 2

****************************************

Core was generated by `postgres: jtolley jtolley [local] SELE'.

Program terminated with signal 6, Aborted.

[New process 20967]

#0 0xb80b0430 in __kernel_vsyscall ()

(gdb) bt

#0 0xb80b0430 in __kernel_vsyscall ()

#1 0xb7f22880 in raise () from /lib/tls/i686/cmov/libc.so.6

#2 0xb7f24248 in abort () from /lib/tls/i686/cmov/libc.so.6

#3 0x0831540e in ExceptionalCondition (conditionName=0x8433274 "!(hjstate->hj_OuterTupleMostCommonValuePartition < hashtable->nMostCommonTuplePartitions)", errorType=0x834b66d "FailedAssertion", fileName=0x84331d9 "nodeHash.c", lineNumber=880) at assert.c:57

#4 0x081b457b in ExecScanHashMostCommonTuples (hjstate=0x86fb320, econtext=0x86fb3ac) at nodeHash.c:880

#5 0x081b60de in ExecHashJoin (node=0x86fb320) at nodeHashjoin.c:357

#6 0x081a4748 in ExecProcNode (node=0x86fb320) at execProcnode.c:406

#7 0x081bb2a1 in ExecSort (node=0x86fb294) at nodeSort.c:102

#8 0x081a4718 in ExecProcNode (node=0x86fb294) at execProcnode.c:417

#9 0x081a242b in standard_ExecutorRun (queryDesc=0x8706e1c, direction=ForwardScanDirection, count=0) at execMain.c:1343

#10 0x0825e64c in PortalRunSelect (portal=0x8700e0c, forward=1 '\001', count=0, dest=0x871db14) at pquery.c:942

#11 0x0825f9ae in PortalRun (portal=0x8700e0c, count=2147483647, isTopLevel=1 '\001', dest=0x871db14, altdest=0x871db14, completionTag=0xbfeb0fc8 "") at pquery.c:796

#12 0x0825a757 in exec_simple_query (query_string=0x86cb6f4 "SELECT n.nspname as \"Schema\",\n c.relname as \"Name\",\n CASE c.relkind WHEN 'r' THEN 'table' WHEN 'v' THEN 'view' WHEN 'i' THEN 'index' WHEN 'S' THEN 'sequence' WHEN 's' THEN 'special' END as \"Type\",\n "...) at postgres.c:992

#13 0x0825bfff in PostgresMain (argc=4, argv=0x8667f58, username=0x8667f30 "jtolley") at postgres.c:3569

#14 0x082261cf in ServerLoop () at postmaster.c:3258

#15 0x08227190 in PostmasterMain (argc=1, argv=0x8664250) at postmaster.c:1031

#16 0x081cc126 in main (argc=1, argv=0x8664250) at main.c:188

*****************************************

\d EXPLAIN output

****************************************

jtolley=# explain SELECT n.nspname as "Schema",

jtolley-# c.relname as "Name",

jtolley-# CASE c.relkind WHEN 'r' THEN 'table' WHEN 'v' THEN 'view' WHEN 'i' THEN 'index' WHEN 'S' THEN 'sequence' WHEN 's' THEN 'special' END as "Type",

jtolley-# pg_catalog.pg_get_userbyid(c.relowner) as "Owner"

jtolley-# FROM pg_catalog.pg_class c

jtolley-# LEFT JOIN pg_catalog.pg_namespace n ON n.oid = c.relnamespace

jtolley-# WHERE c.relkind IN ('r','v','S','')

jtolley-# AND n.nspname <> 'pg_catalog'

jtolley-# AND n.nspname !~ '^pg_toast'

jtolley-# AND pg_catalog.pg_table_is_visible(c.oid)

jtolley-# ORDER BY 1,2;

QUERY PLAN

--------------------------------------------------------------------------------------------------

Sort (cost=13.02..13.10 rows=35 width=133)

Sort Key: n.nspname, c.relname

-> Hash Join (cost=1.14..12.12 rows=35 width=133)

Hash Cond: (c.relnamespace = n.oid)

-> Seq Scan on pg_class c (cost=0.00..9.97 rows=35 width=73)

Filter: (pg_table_is_visible(oid) AND (relkind = ANY ('{r,v,S,""}'::"char"[])))

-> Hash (cost=1.09..1.09 rows=4 width=68)

-> Seq Scan on pg_namespace n (cost=0.00..1.09 rows=4 width=68)

Filter: ((nspname <> 'pg_catalog'::name) AND (nspname !~ '^pg_toast'::text))

(9 rows)

| From: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | pgsql-hackers(at)postgresql(dot)org, Bryce Cutt <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-05 14:48:50 |

| Message-ID: | 20081105144850.GA22181@polonium.part.net |


On Mon, Oct 20, 2008 at 03:42:49PM -0700, Lawrence, Ramon wrote:

> We propose a patch that improves hybrid hash join's performance for large

> multi-batch joins where the probe relation has skew.

I also recommend modifying doc/src/sgml/config.sgml to include the enable_hashjoin_usestatmcvs option.

- Josh / eggyknap

| From: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

|---|---|

| To: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

| Cc: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org, Bryce Cutt <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-05 15:20:41 |

| Message-ID: | 14295.1225898441@sss.pgh.pa.us |


Joshua Tolley <eggyknap(at)gmail(dot)com> writes:

> On Mon, Oct 20, 2008 at 03:42:49PM -0700, Lawrence, Ramon wrote:

>> We propose a patch that improves hybrid hash join's performance for large

>> multi-batch joins where the probe relation has skew.

> I also recommend modifying doc/src/sgml/config.sgml to include the

> enable_hashjoin_usestatmcvs option.

If the patch is actually a win, why would we bother with such a GUC at all?

regards, tom lane

| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

| Cc: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-05 15:22:42 |

| Message-ID: | e7e0a2570811050722p5655f163v1dd492bd259c2ec@mail.gmail.com |


On Wed, Nov 5, 2008 at 8:20 AM, Tom Lane wrote:

> Joshua Tolley writes:

>> On Mon, Oct 20, 2008 at 03:42:49PM -0700, Lawrence, Ramon wrote:

>>> We propose a patch that improves hybrid hash join's performance for large

>>> multi-batch joins where the probe relation has skew.

>

>> I also recommend modifying doc/src/sgml/config.sgml to include the

>> enable_hashjoin_usestatmcvs option.

>

> If the patch is actually a win, why would we bother with such a GUC

> at all?

>

> regards, tom lane

Good point. Leaving it in place for patch review purposes is useful, but we can probably lose it in the end.

- Josh / eggyknap


| From: | "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

|---|---|

| To: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

| Cc: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-06 00:06:11 |

| Message-ID: | 1924d1180811051606w19aaf30du589e8ea10ea5534d@mail.gmail.com |


The error was caused by my asserting against the wrong variable. I never noticed this because I apparently did not have assertions enabled on my development machine. That is fixed now, and with the new patch version I have attached, all assertions pass with your query and my test queries. I added another assertion to that section of the code so that it is a bit more rigorous in confirming that the hash table partition is correct. It does not change the operation of the code.

There are two partition counts. One holds the maximum number of buckets in the hash table, and the other counts the number of buckets actually created for hash values. I was incorrectly testing against the second one, because that check was valid before I started using a hash table to store the buckets.
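The explanation above can be illustrated with a small open-addressing table. In this hypothetical C sketch, none of the names come from the patch. `nslots` is the capacity fixed when the table is created, and `nused` counts the buckets actually created. A value's slot is derived from its hash modulo the capacity, so a valid slot can be at or beyond `nused`. An assertion of the form `slot < nused` can therefore fail on correct input, while `slot < nslots` is the right bound.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define MAX_SLOTS 16

/* Hypothetical skew hash table using linear probing. */
typedef struct {
    int nslots;                   /* capacity: maximum number of buckets */
    int nused;                    /* buckets actually created so far */
    bool occupied[MAX_SLOTS];
    uint32_t hashvalue[MAX_SLOTS];
} SkewTable;

static void skew_init(SkewTable *t, int nslots)
{
    assert(nslots > 0 && nslots <= MAX_SLOTS);
    t->nslots = nslots;
    t->nused = 0;
    for (int i = 0; i < MAX_SLOTS; i++)
        t->occupied[i] = false;
}

/* The correct sanity check: every slot index lies in [0, nslots).
 * Checking slot < nused instead would reject valid slots whenever a
 * hash value lands past the number of buckets created so far. */
static int checked_slot(const SkewTable *t, int slot)
{
    assert(slot >= 0 && slot < t->nslots);
    assert(t->occupied[slot]);
    return slot;
}

/* Finds the bucket for hashvalue, optionally creating it.
 * Returns -1 if the value is absent (or the table is full). */
static int skew_slot(SkewTable *t, uint32_t hashvalue, bool insert)
{
    int slot = (int) (hashvalue % (uint32_t) t->nslots);

    for (int probes = 0; probes < t->nslots; probes++) {
        if (!t->occupied[slot]) {
            if (!insert)
                return -1;        /* empty slot on the probe path: absent */
            t->occupied[slot] = true;
            t->hashvalue[slot] = hashvalue;
            t->nused++;
            return checked_slot(t, slot);
        }
        if (t->hashvalue[slot] == hashvalue)
            return checked_slot(t, slot);
        slot = (slot + 1) % t->nslots;
    }
    return -1;
}
```

For example, after `skew_init(&t, 8)`, inserting hash value 7 creates the first bucket in slot 7, and `nused` becomes 1. The check `slot < nused` would have failed there even though the table is consistent.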

The enable_hashjoin_usestatmcvs flag was valuable for my own research and tests and is likely useful for your review, but Tom is correct that it can be removed in the final version.

- Bryce Cutt


| Attachment | Content-Type | Size |

|---|---|---|

| histojoin_v2.patch | application/octet-stream | 17.7 KB |

| From: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | Bryce Cutt <pandasuit(at)gmail(dot)com> |

| Cc: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-06 03:11:19 |

| Message-ID: | 20081106031119.GA2007@polonium.part.net |


On Wed, Nov 05, 2008 at 04:06:11PM -0800, Bryce Cutt wrote:

> The error is causes by me Asserting against the wrong variable. I

> never noticed this as I apparently did not have assertions turned on

> on my development machine. That is fixed now and with the new patch

> version I have attached all assertions are passing with your query and

> my test queries. I added another assertion to that section of the

> code so that it is a bit more vigorous in confirming the hash table

> partition is correct. It does not change the operation of the code.


Thanks for the new patch; I'll take a look as soon as I can (prolly tomorrow).

- Josh

| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Cc: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-06 22:33:09 |

| Message-ID: | e7e0a2570811061433p34733d1fs2f94f2c508b84e5b@mail.gmail.com |


| Lists: | pgsql-hackers |

On Wed, Nov 5, 2008 at 5:06 PM, Bryce Cutt <pandasuit(at)gmail(dot)com> wrote:

> The error is caused by me Asserting against the wrong variable. I

> never noticed this as I apparently did not have assertions turned on

> on my development machine. That is fixed now and with the new patch

> version I have attached all assertions are passing with your query and

> my test queries. I added another assertion to that section of the

> code so that it is a bit more rigorous in confirming the hash table

> partition is correct. It does not change the operation of the code.

>

> There are two partition counts. One holds the maximum number of

> buckets in the hash table and the other counts the number of actual

> buckets created for hash values. I was incorrectly testing against

> the second one because that was valid before I started using a hash

> table to store the buckets.

>

> The enable_hashjoin_usestatmcvs flag was valuable for my own research

> and tests and likely useful for your review but Tom is correct that it

> can be removed in the final version.

>

> - Bryce Cutt

Well, that builds nicely, lets me import the data, and I've seen a

performance improvement with enable_hashjoin_usestatmcvs on vs. off. I

plan to test that more formally (though probably not fully to the

extent you did in your paper; just enough to feel comfortable that I'm

getting similar results). Then I'll spend some time poking in the

code, for the relatively little good I feel I can do in that capacity,

and I'll also investigate scenarios with particularly inaccurate

statistics. Stay tuned.

- Josh

| From: | Simon Riggs <simon(at)2ndQuadrant(dot)com> |

|---|---|

| To: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

| Cc: | Bryce Cutt <pandasuit(at)gmail(dot)com>, Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-06 22:52:54 |

| Message-ID: | 1226011974.27904.25.camel@ebony.2ndQuadrant |


| Lists: | pgsql-hackers |

On Thu, 2008-11-06 at 15:33 -0700, Joshua Tolley wrote:

> Stay tuned.

Minor question on this patch. AFAICS there is another patch that seems

to be aiming at exactly the same use case. Jonah's Bloom filter patch.

Shouldn't we have a dust off to see which one is best? Or at least a

discussion to test whether they overlap? Perhaps you already did that

and I missed it because I'm not very tuned in on this thread.

--

Simon Riggs www.2ndQuadrant.com

PostgreSQL Training, Services and Support

| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Simon Riggs" <simon(at)2ndquadrant(dot)com> |

| Cc: | "Bryce Cutt" <pandasuit(at)gmail(dot)com>, "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org, jonah(dot)harris(at)gmail(dot)com |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-06 23:22:16 |

| Message-ID: | e7e0a2570811061522g63a06fa8o4f02972a607840eb@mail.gmail.com |


| Lists: | pgsql-hackers |

On Thu, Nov 6, 2008 at 3:52 PM, Simon Riggs <simon(at)2ndquadrant(dot)com> wrote:

>

> On Thu, 2008-11-06 at 15:33 -0700, Joshua Tolley wrote:

>

>> Stay tuned.

>

> Minor question on this patch. AFAICS there is another patch that seems

> to be aiming at exactly the same use case. Jonah's Bloom filter patch.

>

> Shouldn't we have a dust off to see which one is best? Or at least a

> discussion to test whether they overlap? Perhaps you already did that

> and I missed it because I'm not very tuned in on this thread.

>

> --

> Simon Riggs www.2ndQuadrant.com

> PostgreSQL Training, Services and Support

We haven't had that discussion AFAIK, and definitely should. First

glance suggests they could coexist peacefully, with proper coaxing. If

I understand things properly, Jonah's patch filters tuples early in

the join process, and this patch tries to ensure that hash join

batches are kept in RAM when they're most likely to be used. So

they're orthogonal in purpose, and the patches actually apply *almost*

cleanly together. Jonah, any comments? If I continue to have some time

to devote, and get through all I think I can do to review this patch,

I'll gladly look at Jonah's too, FWIW.

- Josh

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | "Joshua Tolley" <eggyknap(at)gmail(dot)com>, "Simon Riggs" <simon(at)2ndquadrant(dot)com> |

| Cc: | "Bryce Cutt" <pandasuit(at)gmail(dot)com>, "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, <pgsql-hackers(at)postgresql(dot)org>, <jonah(dot)harris(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-07 00:31:15 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C16D7@exchange20.mercury.ad.ubc.ca |


| Lists: | pgsql-hackers |

> -----Original Message-----

> > Minor question on this patch. AFAICS there is another patch that seems

> > to be aiming at exactly the same use case. Jonah's Bloom filter patch.

> >

> > Shouldn't we have a dust off to see which one is best? Or at least a

> > discussion to test whether they overlap? Perhaps you already did that

> > and I missed it because I'm not very tuned in on this thread.

> >

> > --

> > Simon Riggs www.2ndQuadrant.com

> > PostgreSQL Training, Services and Support

>

> We haven't had that discussion AFAIK, and definitely should. First

> glance suggests they could coexist peacefully, with proper coaxing. If

> I understand things properly, Jonah's patch filters tuples early in

> the join process, and this patch tries to ensure that hash join

> batches are kept in RAM when they're most likely to be used. So

> they're orthogonal in purpose, and the patches actually apply *almost*

> cleanly together. Jonah, any comments? If I continue to have some time

> to devote, and get through all I think I can do to review this patch,

> I'll gladly look at Jonah's too, FWIW.

>

> - Josh

The skew patch and bloom filter patch are orthogonal and can both be

applied. The bloom filter patch is a great idea, and it is used in many

other database systems. You can use the TPC-H data set to demonstrate

that the bloom filter patch will significantly improve performance of

multi-batch joins (with or without data skew).

Any query that filters a build table before joining on the probe table

will show improvements with a bloom filter. For example,

select * from customer, orders where customer.c_nationkey = 10 and

customer.c_custkey = orders.o_custkey

The bloom filter on customer would allow us to avoid probing with orders

tuples that cannot possibly find a match due to the selection criteria.

This is especially beneficial for multi-batch joins where an orders

tuple must be written to disk if its corresponding customer batch is not

the in-memory batch.
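To make the Bloom-filter idea concrete, here is a minimal C sketch. It assumes 32-bit integer join keys, a fixed 1024-bit filter and two ad hoc integer mixing functions; all names are hypothetical and this is not the code in Jonah's patch. Customer keys that survive the `c_nationkey = 10` selection are added to the filter, and an orders tuple whose key fails the test can be discarded instead of being written to a batch file.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define BLOOM_BITS 1024         /* filter size in bits (assumed) */

typedef struct BloomFilter
{
    uint8_t     bits[BLOOM_BITS / 8];
} BloomFilter;

/* Integer mixer; a production filter would choose its hashes more carefully. */
static uint32_t
mix1(uint32_t x)
{
    x ^= x >> 16;
    x *= 0x7feb352dU;
    x ^= x >> 15;
    x *= 0x846ca68bU;
    x ^= x >> 16;
    return x;
}

/* Second hash derived from the first by perturbing the input. */
static uint32_t
mix2(uint32_t x)
{
    return mix1(x ^ 0x9e3779b9U);
}

static void
bloom_init(BloomFilter *bf)
{
    memset(bf->bits, 0, sizeof(bf->bits));
}

static void
bloom_set(BloomFilter *bf, uint32_t bit)
{
    bit %= BLOOM_BITS;
    bf->bits[bit / 8] |= (uint8_t) (1u << (bit % 8));
}

static int
bloom_test(const BloomFilter *bf, uint32_t bit)
{
    bit %= BLOOM_BITS;
    return (bf->bits[bit / 8] >> (bit % 8)) & 1;
}

/* Called for every build (customer) tuple that passes the selection. */
static void
bloom_add(BloomFilter *bf, uint32_t key)
{
    bloom_set(bf, mix1(key));
    bloom_set(bf, mix2(key));
}

/*
 * Called for every probe (orders) tuple before it would be spilled.
 * Returns 0 only if the key is certainly absent from the build side;
 * false positives are possible, false negatives are not.
 */
static int
bloom_may_contain(const BloomFilter *bf, uint32_t key)
{
    return bloom_test(bf, mix1(key)) && bloom_test(bf, mix2(key));
}
```

Because a negative answer is always correct, filtering this way never loses a join result; a false positive only costs the probe-and-spill work that would have happened anyway.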

I have no experience reviewing patches, but I would be happy to help

contribute/review the bloom filter patch as best I can.

--

Dr. Ramon Lawrence

Assistant Professor, Department of Computer Science, University of

British Columbia Okanagan

E-mail: ramon(dot)lawrence(at)ubc(dot)ca

| From: | "Joshua Tolley" <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | "Simon Riggs" <simon(at)2ndquadrant(dot)com>, "Bryce Cutt" <pandasuit(at)gmail(dot)com>, "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org, jonah(dot)harris(at)gmail(dot)com |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-07 00:44:56 |

| Message-ID: | e7e0a2570811061644v12ca8dd6ud76284ee8fa37d25@mail.gmail.com |


| Lists: | pgsql-hackers |

On Thu, Nov 6, 2008 at 5:31 PM, Lawrence, Ramon <ramon(dot)lawrence(at)ubc(dot)ca> wrote:

>> -----Original Message-----

>> > Minor question on this patch. AFAICS there is another patch that seems

>> > to be aiming at exactly the same use case. Jonah's Bloom filter patch.

>> >

>> > Shouldn't we have a dust off to see which one is best? Or at least a

>> > discussion to test whether they overlap? Perhaps you already did that

>> > and I missed it because I'm not very tuned in on this thread.

>> >

>> > --

>> > Simon Riggs www.2ndQuadrant.com

>> > PostgreSQL Training, Services and Support

>>

>> We haven't had that discussion AFAIK, and definitely should. First

>> glance suggests they could coexist peacefully, with proper coaxing. If

>> I understand things properly, Jonah's patch filters tuples early in

>> the join process, and this patch tries to ensure that hash join

>> batches are kept in RAM when they're most likely to be used. So

>> they're orthogonal in purpose, and the patches actually apply *almost*

>> cleanly together. Jonah, any comments? If I continue to have some time

>> to devote, and get through all I think I can do to review this patch,

>> I'll gladly look at Jonah's too, FWIW.

>>

>> - Josh

>

> The skew patch and bloom filter patch are orthogonal and can both be

> applied. The bloom filter patch is a great idea, and it is used in many

> other database systems. You can use the TPC-H data set to demonstrate

> that the bloom filter patch will significantly improve performance of

> multi-batch joins (with or without data skew).

>

> Any query that filters a build table before joining on the probe table

> will show improvements with a bloom filter. For example,

>

> select * from customer, orders where customer.c_nationkey = 10 and

> customer.c_custkey = orders.o_custkey

>

> The bloom filter on customer would allow us to avoid probing with orders

> tuples that cannot possibly find a match due to the selection criteria.

> This is especially beneficial for multi-batch joins where an orders

> tuple must be written to disk if its corresponding customer batch is not

> the in-memory batch.

>

> I have no experience reviewing patches, but I would be happy to help

> contribute/review the bloom filter patch as best I can.

>

> --

> Dr. Ramon Lawrence

> Assistant Professor, Department of Computer Science, University of

> British Columbia Okanagan

> E-mail: ramon(dot)lawrence(at)ubc(dot)ca

>

I've no patch review experience, either -- this is my first one. See

http://wiki.postgresql.org/wiki/Reviewing_a_Patch for details on what

a reviewer ought to do in general; various patch review discussions on

the -hackers list have also proven helpful. As regards this patch

specifically, it seems we could merge the two patches into one and

consider them together. However, the bloom filter patch is listed as a

"Work in Progress" on

http://wiki.postgresql.org/wiki/CommitFest_2008-11. Perhaps it needs

more work before being considered seriously? Jonah, what do you think

would be most helpful?

- Josh / eggyknap

| From: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | Bryce Cutt <pandasuit(at)gmail(dot)com> |

| Cc: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-10 08:57:57 |

| Message-ID: | 20081110085757.GB10915@uber |


| Lists: | pgsql-hackers |

On Wed, Nov 05, 2008 at 04:06:11PM -0800, Bryce Cutt wrote:

> The error is caused by me Asserting against the wrong variable. I

> never noticed this as I apparently did not have assertions turned on

> on my development machine. That is fixed now and with the new patch

> version I have attached all assertions are passing with your query and

> my test queries. I added another assertion to that section of the

> code so that it is a bit more rigorous in confirming the hash table

> partition is correct. It does not change the operation of the code.

>

> There are two partition counts. One holds the maximum number of

> buckets in the hash table and the other counts the number of actual

> buckets created for hash values. I was incorrectly testing against

> the second one because that was valid before I started using a hash

> table to store the buckets.

>

> The enable_hashjoin_usestatmcvs flag was valuable for my own research

> and tests and likely useful for your review but Tom is correct that it

> can be removed in the final version.

>

> - Bryce Cutt

>

Well, this version seems to work as advertised. Skewed data sets tend to

hash join more quickly with this turned on, and data sets with

deliberately bad statistics don't perform much differently than with the

feature turned off. The patch applies cleanly to CVS HEAD.

I don't consider myself qualified to do a decent code review. However I

noticed that the comments are all done with // instead of /* ... */.

That should probably be changed.

To those familiar with code review: is there more I should do to review

this?

- Josh / eggyknap

| From: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-21 00:44:06 |

| Message-ID: | 22901.1227228246@sss.pgh.pa.us |


| Lists: | pgsql-hackers |

"Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> writes:

> We propose a patch that improves hybrid hash join's performance for

> large multi-batch joins where the probe relation has skew.

> ...

> The basic idea

> is to keep build relation tuples in a small in-memory hash table that

> have join values that are frequently occurring in the probe relation.

I looked at this patch a little.

I'm a tad worried about what happens when the values that are frequently

occurring in the outer relation are also frequently occurring in the

inner (which hardly seems an improbable case). Don't you stand a severe

risk of blowing out the in-memory hash table? It doesn't appear to me

that the code has any way to back off once it's decided that a certain

set of join key values are to be treated in-memory. Splitting the main

join into more batches certainly doesn't help with that.

Also, AFAICS the benefit of this patch comes entirely from avoiding dump

and reload of tuples bearing the most common values, which means it's a

significant waste of cycles when there's only one batch. It'd be better

to avoid doing any of the extra work in the single-batch case.

One thought that might address that point as well as the difficulty of

getting stats in nontrivial cases is to wait until we've overrun memory

and are forced to start batching, and at that point determine on-the-fly

which are the most common hash values from inspection of the hash table

as we dump it out. This would amount to optimizing on the basis of

frequency in the *inner* relation not the outer, but offhand I don't see

any strong theoretical basis why that wouldn't be just as good. It

could lose if the first work_mem worth of inner tuples isn't

representative of what follows; but this hardly seems more dangerous

than depending on MCV stats that are for the whole outer relation rather

than the portion of it being selected.

regards, tom lane
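Tom's alternative, detecting skew from the inner relation only once work_mem has been overrun, amounts to a frequency count over the hash values already in memory. The following is a minimal C sketch under the assumption that the hash values of the in-memory inner tuples are available as a plain array (in the executor they live in hash buckets); `top_k_hash_values` and `HashFreq` are hypothetical names.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

typedef struct HashFreq
{
    uint32_t    hashvalue;
    int         count;
} HashFreq;

static int
cmp_u32(const void *a, const void *b)
{
    uint32_t    x = *(const uint32_t *) a;
    uint32_t    y = *(const uint32_t *) b;

    return (x > y) - (x < y);
}

/* Sort by descending count, breaking ties by ascending hash value. */
static int
cmp_freq_desc(const void *a, const void *b)
{
    const HashFreq *x = a;
    const HashFreq *y = b;

    if (x->count != y->count)
        return (y->count > x->count) - (y->count < x->count);
    return (x->hashvalue > y->hashvalue) - (x->hashvalue < y->hashvalue);
}

/*
 * Given the hash values of the inner tuples that filled work_mem, write the
 * k most frequent values to out[] and return how many were written.
 */
static int
top_k_hash_values(const uint32_t *hashes, int n, int k, HashFreq *out)
{
    uint32_t   *sorted;
    HashFreq   *freq;
    int         nfreq = 0;

    if (n == 0 || k <= 0)
        return 0;
    sorted = malloc((size_t) n * sizeof(uint32_t));
    freq = malloc((size_t) n * sizeof(HashFreq));
    assert(sorted != NULL && freq != NULL);
    memcpy(sorted, hashes, (size_t) n * sizeof(uint32_t));
    qsort(sorted, (size_t) n, sizeof(uint32_t), cmp_u32);

    /* Collapse runs of equal hash values into (value, count) pairs. */
    for (int i = 0; i < n; i++)
    {
        if (nfreq > 0 && freq[nfreq - 1].hashvalue == sorted[i])
            freq[nfreq - 1].count++;
        else
        {
            freq[nfreq].hashvalue = sorted[i];
            freq[nfreq].count = 1;
            nfreq++;
        }
    }
    qsort(freq, (size_t) nfreq, sizeof(HashFreq), cmp_freq_desc);
    if (k > nfreq)
        k = nfreq;
    memcpy(out, freq, (size_t) k * sizeof(HashFreq));
    free(sorted);
    free(freq);
    return k;
}
```

Sorting keeps the sketch short; a real implementation inspecting buckets during the dump could count in place without the extra copies.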

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

| Cc: | <pgsql-hackers(at)postgresql(dot)org>, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-11-24 16:16:57 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C1751@exchange20.mercury.ad.ubc.ca |


| Lists: | pgsql-hackers |

> -----Original Message-----

> From: Tom Lane [mailto:tgl(at)sss(dot)pgh(dot)pa(dot)us]

> I'm a tad worried about what happens when the values that are frequently

> occurring in the outer relation are also frequently occurring in the

> inner (which hardly seems an improbable case). Don't you stand a severe

> risk of blowing out the in-memory hash table? It doesn't appear to me

> that the code has any way to back off once it's decided that a certain

> set of join key values are to be treated in-memory. Splitting the main

> join into more batches certainly doesn't help with that.

>

> Also, AFAICS the benefit of this patch comes entirely from avoiding dump

> and reload of tuples bearing the most common values, which means it's a

> significant waste of cycles when there's only one batch. It'd be better

> to avoid doing any of the extra work in the single-batch case.

>

> One thought that might address that point as well as the difficulty of

> getting stats in nontrivial cases is to wait until we've overrun memory

> and are forced to start batching, and at that point determine on-the-fly

> which are the most common hash values from inspection of the hash table

> as we dump it out. This would amount to optimizing on the basis of

> frequency in the *inner* relation not the outer, but offhand I don't see

> any strong theoretical basis why that wouldn't be just as good. It

> could lose if the first work_mem worth of inner tuples isn't

> representative of what follows; but this hardly seems more dangerous

> than depending on MCV stats that are for the whole outer relation rather

> than the portion of it being selected.

>

> regards, tom lane

You are correct with both observations. The patch only has a benefit

when there is more than one batch. Also, there is a potential issue

with MCV hash table overflows if the number of tuples that match the

MCVs in the build relation is very large.

Bryce has created a patch (attached) that disables the code for one

batch joins. This patch also checks for MCV hash table overflows and

handles them by "flushing" from the MCV hash table back to the main hash

table. The main hash table will then resolve overflows as usual. Note

that this will cause the worst case of a build table with all the same

values to be handled the same as the current hash code, i.e., it will

attempt to re-partition until it eventually gives up and then allocates

the entire partition in memory. There may be a better way to handle

this case, but the new patch will remain consistent with the current

hash join implementation.
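The two fixes described above (skip the MCV logic for single-batch joins, and flush the MCV table back into the main hash table when it overflows) can be sketched in C as follows. This is a simplified, hypothetical model: the main hash table is reduced to counters, the names are invented, and for simplicity the sketch flushes the whole MCV table at once and then stops using it, which may differ from what the patch actually does.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_SKEW_TUPLES 64      /* illustration only */

typedef struct Tuple
{
    unsigned    hashvalue;
    size_t      size;
} Tuple;

typedef struct JoinState
{
    int         nbatch;
    int         skewEnabled;
    Tuple       skewTuples[MAX_SKEW_TUPLES];
    int         nskew;
    size_t      skewSpaceUsed;
    size_t      skewSpaceAllowed;
    size_t      mainSpaceUsed;  /* stands in for the regular hash table */
    int         nmain;
} JoinState;

static void
join_state_init(JoinState *js, int nbatch, size_t skewSpaceAllowed)
{
    js->nbatch = nbatch;
    /* Skew handling only pays off when some batches go to disk. */
    js->skewEnabled = (nbatch > 1);
    js->nskew = 0;
    js->skewSpaceUsed = 0;
    js->skewSpaceAllowed = skewSpaceAllowed;
    js->mainSpaceUsed = 0;
    js->nmain = 0;
}

static void
insert_main(JoinState *js, const Tuple *tup)
{
    /* A real table would pick a bucket and batch, splitting if needed. */
    js->mainSpaceUsed += tup->size;
    js->nmain++;
}

/* Move every MCV tuple back into the main table and stop using skew. */
static void
flush_skew_table(JoinState *js)
{
    for (int i = 0; i < js->nskew; i++)
        insert_main(js, &js->skewTuples[i]);
    js->nskew = 0;
    js->skewSpaceUsed = 0;
    js->skewEnabled = 0;
}

static void
insert_build_tuple(JoinState *js, const Tuple *tup, int matchesMcv)
{
    if (js->skewEnabled && matchesMcv)
    {
        assert(js->nskew < MAX_SKEW_TUPLES);
        js->skewTuples[js->nskew++] = *tup;
        js->skewSpaceUsed += tup->size;
        /* Overflow: hand everything back so each key lives in one table. */
        if (js->skewSpaceUsed > js->skewSpaceAllowed ||
            js->nskew == MAX_SKEW_TUPLES)
            flush_skew_table(js);
        return;
    }
    insert_main(js, tup);
}
```

Flushing everything at once guarantees that build tuples for a given key are never split between the two tables, which keeps probing simple; the main table then deals with the extra tuples through its normal overflow handling.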

The issue with determining and using the MCV stats is more challenging

than it appears. First, knowing the MCVs of the build table will not

help us. What we need are the MCVs of the probe table because by

knowing those values we will keep the tuples with those values in the

build relation in memory. For example, consider a join between tables

Part and LineItem. Assume 1 popular part accounts for 10% of all

LineItems. If Part is the build relation and LineItem is the probe

relation, then by keeping that 1 part record in memory, we will

guarantee that we do not need to write out 10% of LineItem. If a

selection occurs on LineItem before the join, it may change the

distribution of LineItem (the MCVs) but it is probable that they are

still a good estimate of the MCVs in the derived LineItem relation. (We

did experiments on trying to sample the first few thousand tuples of the

probe relation to dynamically determine the MCVs but generally found

this was inaccurate due to non-random samples.) In essence, the goal is

to smartly pick the tuples that remain in the in-memory batch before

probing begins. Since the number of MCVs is small, incorrectly

selecting build tuples to remain in memory has negligible cost.
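The Part/LineItem argument can be put into numbers with a small C helper. It is a back-of-the-envelope model, not taken from the paper: it assumes non-MCV probe keys hash uniformly over `nbatch` batches with batch 0 kept in memory, and it ignores the memory the MCV table takes away from the in-memory batch.

```c
#include <assert.h>

/*
 * Expected fraction of probe tuples written to batch files.
 * mcvFraction is the share of probe tuples whose join key is held in the
 * in-memory MCV table (0.0 when skew handling is off).
 */
static double
probe_fraction_spilled(int nbatch, double mcvFraction)
{
    double      spillShare;

    assert(nbatch >= 1 && mcvFraction >= 0.0 && mcvFraction <= 1.0);
    /* Non-MCV probe tuples whose batch is not batch 0 get spilled. */
    spillShare = (double) (nbatch - 1) / nbatch;
    /* Probe tuples matching an in-memory MCV are never spilled. */
    return (1.0 - mcvFraction) * spillShare;
}
```

With ten batches, about 90% of LineItem is written out and re-read without skew handling, versus about 81% when the single popular part is kept in memory: the 10% of LineItem that matches it is never spilled. With one batch both figures are zero, which matches the observation that the technique only helps multi-batch joins.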

If we assume that LineItem has been filtered so much that it is now

smaller than Part and is the build relation then the MCV approach does

not apply. There is no skew in Part on partkey (since it is the PK) and

knowing the MCV partkeys in LineItem does not help us because they each

only join with a single tuple in Part. In this case, the MCV approach

should not be used because no benefit is possible, and it will not be

used because there will be no MCVs for Part.partkey.

The bad case with MCV hash table overflow requires a many-to-many join

between the two relations which would not occur on the more typical

PK-FK joins.

--

Dr. Ramon Lawrence

Assistant Professor, Department of Computer Science, University of

British Columbia Okanagan

E-mail: ramon(dot)lawrence(at)ubc(dot)ca

| Attachment | Content-Type | Size |

|---|---|---|

| histojoin_v3.patch | application/octet-stream | 21.0 KB |

| From: | "Robert Haas" <robertmhaas(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-12-16 04:51:38 |

| Message-ID: | 603c8f070812152051j5510a229j6f268526677ed906@mail.gmail.com |


| Lists: | pgsql-hackers |

I have to admit that I haven't fully grokked what this patch is about

just yet, so what follows is mostly a coding style review at this

point. It would help a lot if you could add some comments to the new

functions that are being added to explain the purpose of each at a

very high level. There's clearly been a lot of thought put into some

parts of this logic, so it would be worth explaining the reasoning

behind that logic.

This patch applies cleanly against CVS HEAD, but does not compile

(please fix the warning, too).

nodeHash.c:88: warning: no previous prototype for 'freezeNextMCVPartiton'

nodeHash.c: In function 'freezeNextMCVPartiton':

nodeHash.c:148: error: 'struct HashJoinTableData' has no member named 'inTupIOs'

I commented out the offending line. It errored out again here:

nodeHashjoin.c: In function 'getMostCommonValues':

nodeHashjoin.c:136: error: wrong type argument to unary plus

After removing the stray + sign, it compiled, but failed the

"rangefuncs" regression test. If you're going to keep the

enable_hashjoin_usestatmcvs GUC around, you need to patch

rangefuncs.out so that the regression tests pass. I think, however,

that there was some discussion of removing that before the patch is

committed; if so, please do that instead. Keeping the GUC would also

require patching the documentation, which the current patch does not

do.

getMostCommonValues() isn't a good name for a non-static function

because there's nothing to tip the reader off to the fact that it has

something to do with hash joins; compare with the other function names

defined in the same header file. On the flip side, that function has

only one call site, so it should probably be made static and not

declared in the header file at all. Some of the other new functions

need similar treatment. I am also a little suspicious of this bit of

code:

    relid = getrelid(((SeqScan *) ((SeqScanState *)
                     outerPlanState(hjstate))->ps.plan)->scanrelid,
                     estate->es_range_table);
    clause = (FuncExprState *) lfirst(list_head(hjstate->hashclauses));
    argstate = (ExprState *) lfirst(list_head(clause->args));
    variable = (Var *) argstate->expr;

I'm not very familiar with the hash join code, but it seems like there

are a lot of assumptions being made there about what things are

pointing to what other things. Is this actually safe? And if it

is, perhaps a comment explaining why?

getMostCommonValues() also appears to be creating and maintaining a

counter called collisionsWhileHashing, but nothing is ever done with

the counter. On a similar note, the variables relattnum, atttype, and

atttypmod don't appear to be necessary; 2 out of 3 of them are only

used once, so maybe inlining the reference and dropping the variable

would make more sense. Also, the if (HeapTupleIsValid(statsTuple))

block encompasses the whole rest of the function, maybe if

(!HeapTupleIsValid(statsTuple)) return?

I don't understand why

hashtable->mostCommonTuplePartition[bucket].tuples and .frozen need to

be initialized to 0. It looks to me like those are in a zero-filled

array that was just allocated, so it shouldn't be necessary to re-zero

them, unless I'm missing something.

freezeNextMCVPartiton is misspelled consistently throughout (the last

three letters should be "ion"). I also don't think it makes sense to

enclose everything but the first two lines of that function in an

else-block.

There is some initialization code in ExecHashJoin() that looks like it

belongs in ExecHashTableCreate.

It appears to me that the interface to isAMostCommonValue() could be

simplified by just making it return the partition number. It could

perhaps be renamed something like ExecHashGetMCVPartition().
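The suggested interface, a lookup that simply returns the partition number, might look like the following minimal C sketch. The name `get_mcv_partition`, the `McvSlot` layout and the `INVALID_MCV_PARTITION` sentinel are hypothetical stand-ins for whatever `ExecHashGetMCVPartition()` would use; the sketch assumes an open-addressed table with linear probing and at least one empty slot.

```c
#include <assert.h>
#include <stdint.h>

#define INVALID_MCV_PARTITION (-1)  /* hypothetical "not an MCV" result */

typedef struct McvSlot
{
    uint32_t    hashvalue;
    int         inUse;
} McvSlot;

/*
 * Return the MCV partition holding hashvalue, or INVALID_MCV_PARTITION if
 * the value is not one of the most common values.  nslots must be a power
 * of two and the table must contain at least one empty slot.
 */
static int
get_mcv_partition(const McvSlot *slots, int nslots, uint32_t hashvalue)
{
    int         bucket;

    assert(nslots > 0 && (nslots & (nslots - 1)) == 0);
    bucket = (int) (hashvalue & (uint32_t) (nslots - 1));
    while (slots[bucket].inUse)
    {
        if (slots[bucket].hashvalue == hashvalue)
            return bucket;
        bucket = (bucket + 1) & (nslots - 1);
    }
    return INVALID_MCV_PARTITION;
}
```

A caller then needs a single test such as `if (partno != INVALID_MCV_PARTITION)` rather than a boolean result plus an output parameter.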

Does ExecHashTableDestroy() need to explicitly pfree

hashtable->mostCommonTuplePartition and

hashtable->flushOrderedMostCommonTuplePartition? I would think those

would be allocated out of hashCxt - if they aren't, they probably

should be.

Department of minor nitpicks: (1) C++-style comments are not

permitted, (2) function names need to be capitalized like_this() or

LikeThis() but not likeThis(), (3) when defining a function, the

return type should be placed on the line preceding the actual function

name, so that the function name is at the beginning of the line, (4)

curly braces should be avoided around a block containing only one

statement, (5) excessive blank lines should be avoided (for example,

the one in costsize.c is clearly unnecessary, and there's at least one

place where you add two consecutive blank lines), and (6) I believe

the accepted way to write an empty loop is an indented semi-colon on

the next line, rather than {} on the same line as the while.
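The layout points above can be seen together in a small, self-contained C function. The function itself is a made-up example unrelated to the patch; it follows the conventions listed in the review (C-style comments, return type on its own line, lower_case naming, no braces around single statements, an empty loop body written as an indented semicolon) and also shows the early-return shape suggested for the `HeapTupleIsValid(statsTuple)` check.

```c
#include <assert.h>
#include <stddef.h>

/*
 * count_leading_zero_bytes
 *      Return the number of zero bytes at the start of buf.
 */
static size_t
count_leading_zero_bytes(const unsigned char *buf, size_t len)
{
    size_t      i;

    /* Early return instead of wrapping the rest of the function in a block */
    if (buf == NULL)
        return 0;

    for (i = 0; i < len && buf[i] == 0; i++)
        ;                       /* empty body: the loop header does the work */

    return i;
}
```

Whitespace details such as tab stops are normally left to pgindent, so the sketch concentrates on the structural conventions.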

I will try to do some more substantive testing of this as well.

...Robert

| From: | "Robert Haas" <robertmhaas(at)gmail(dot)com> |

|---|---|

| To: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

| Cc: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-12-18 03:53:36 |

| Message-ID: | 603c8f070812171953q3f220ed2keca9e6a62694eb62@mail.gmail.com |


| Lists: | pgsql-hackers |

Dr. Lawrence:

I'm still working on reviewing this patch. I've managed to load the

sample TPCH data from tpch1g1z.zip after changing the line endings to

UNIX-style and chopping off the trailing vertical bars. (If anyone is

interested, I have the results of pg_dump | bzip2 -9 on the resulting

database, which I would be happy to upload if someone has server

space. It is about 250MB.)

But, I'm not sure quite what to do in terms of generating queries.

TPCHSkew contains QGEN.EXE, but that seems to require that you provide

template queries as input, and I'm not sure where to get the

templates.

Any suggestions?

Thanks,

...Robert

| From: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca> |

|---|---|

| To: | "Robert Haas" <robertmhaas(at)gmail(dot)com> |

| Cc: | "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, <pgsql-hackers(at)postgresql(dot)org>, "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-12-18 05:39:16 |

| Message-ID: | 6EEA43D22289484890D119821101B1DF2C180E@exchange20.mercury.ad.ubc.ca |


| Lists: | pgsql-hackers |

Robert,

You do not need to use qgen.exe to generate queries as you are not

running the TPC-H benchmark test. Attached is an example of the 22

sample TPC-H queries according to the benchmark.

We have not tested using the TPC-H queries for this particular patch and

only use the TPC-H database as a large, skewed data set. The simpler

queries we test involve joins of Part-Lineitem or Supplier-Lineitem such

as:

Select * from part, lineitem where p_partkey = l_partkey

OR

Select count(*) from part, lineitem where p_partkey = l_partkey

The count(*) version is usually more useful for comparisons as the

generation of output tuples on the client side (say with pgadmin)

dominates the actual time to complete the query.

To isolate query costs, we also test using a simple server-side

function. I have also attached the setup description.

I would be happy to help in any way I can.

Bryce is currently working on an updated patch according to your

suggestions.

--

Dr. Ramon Lawrence

Assistant Professor, Department of Computer Science, University of

British Columbia Okanagan

E-mail: ramon(dot)lawrence(at)ubc(dot)ca

> -----Original Message-----

> From: pgsql-hackers-owner(at)postgresql(dot)org [mailto:pgsql-hackers-

> owner(at)postgresql(dot)org] On Behalf Of Robert Haas

> Sent: December 17, 2008 7:54 PM

> To: Lawrence, Ramon

> Cc: Tom Lane; pgsql-hackers(at)postgresql(dot)org; Bryce Cutt

> Subject: Re: [HACKERS] Proposed Patch to Improve Performance of Multi-

> Batch Hash Join for Skewed Data Sets

>

> Dr. Lawrence:

>

> I'm still working on reviewing this patch. I've managed to load the

> sample TPCH data from tpch1g1z.zip after changing the line endings to

> UNIX-style and chopping off the trailing vertical bars. (If anyone is

> interested, I have the results of pg_dump | bzip2 -9 on the resulting

> database, which I would be happy to upload if someone has server

> space. It is about 250MB.)

>

> But, I'm not sure quite what to do in terms of generating queries.

> TPCHSkew contains QGEN.EXE, but that seems to require that you provide

> template queries as input, and I'm not sure where to get the

> templates.

>

> Any suggestions?

>

> Thanks,

>

> ...Robert


| Attachment | Content-Type | Size |

|---|---|---|

| test_queries.txt | text/plain | 13.1 KB |

| setup.txt | text/plain | 1.8 KB |

| From: | "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

|---|---|

| To: | "Robert Haas" <robertmhaas(at)gmail(dot)com> |

| Cc: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-12-20 10:58:37 |

| Message-ID: | 1924d1180812200258v2cf4dcdfn5aeee21bb4e3ce0d@mail.gmail.com |


| Lists: | pgsql-hackers |

Robert,

I thoroughly appreciate the constructive criticism.

The compile errors were caused by my convoluted development process. I
will try not to waste your time with errors like that in the future.

I have updated the code to follow the conventions and suggestions
given, and I am now working on adding the requested documentation. I
will not submit the next patch until that is done. The functionality
has not changed, so you can run performance tests with the patch you
already have.

As for that particularly ugly piece of code: I figured it out while
digging through the selfuncs code. Basically, I needed a way to get
the statistics tuple for the outer relation's join column, and to do
that I needed to determine the actual relation ID and attribute number
being joined.

I have not yet found a better way to do this, but I am sure someone
on the mailing list knows far more about this than I do.

I could be more rigorous in checking that the things I am casting are
exactly what I expect, although my tests have been consistent so far.

I am essentially doing what is done in selfuncs. I believe I could
use the examine_variable() function in selfuncs.c, except that it
needs a PlannerInfo, and I don't think I can get one from inside the
join initialization code.

- Bryce Cutt

On Mon, Dec 15, 2008 at 8:51 PM, Robert Haas <robertmhaas(at)gmail(dot)com> wrote:

> I have to admit that I haven't fully grokked what this patch is about
> just yet, so what follows is mostly a coding style review at this
> point. It would help a lot if you could add some comments to the new
> functions that are being added to explain the purpose of each at a
> very high level. There's clearly been a lot of thought put into some
> parts of this logic, so it would be worth explaining the reasoning
> behind that logic.

>

> This patch applies cleanly against CVS HEAD, but does not compile
> (please fix the warning, too).

>

> nodeHash.c:88: warning: no previous prototype for 'freezeNextMCVPartiton'

> nodeHash.c: In function 'freezeNextMCVPartiton':

> nodeHash.c:148: error: 'struct HashJoinTableData' has no member named 'inTupIOs'

>

> I commented out the offending line. It errored out again here:

>

> nodeHashjoin.c: In function 'getMostCommonValues':

> nodeHashjoin.c:136: error: wrong type argument to unary plus

>

> After removing the stray + sign, it compiled, but failed the
> "rangefuncs" regression test. If you're going to keep the
> enable_hashjoin_usestatmvcs() GUC around, you need to patch
> rangefuncs.out so that the regression tests pass. I think, however,
> that there was some discussion of removing that before the patch is
> committed; if so, please do that instead. Keeping the GUC would also
> require patching the documentation, which the current patch does not
> do.

>

> getMostCommonValues() isn't a good name for a non-static function
> because there's nothing to tip the reader off to the fact that it has
> something to do with hash joins; compare with the other function names
> defined in the same header file. On the flip side, that function has
> only one call site, so it should probably be made static and not
> declared in the header file at all. Some of the other new functions
> need similar treatment. I am also a little suspicious of this bit of
> code:

>

> relid = getrelid(((SeqScan *) ((SeqScanState *)

> outerPlanState(hjstate))->ps.plan)->scanrelid,

> estate->es_range_table);

> clause = (FuncExprState *) lfirst(list_head(hjstate->hashclauses));

> argstate = (ExprState *) lfirst(list_head(clause->args));

> variable = (Var *) argstate->expr;

>

> I'm not very familiar with the hash join code, but it seems like there
> are a lot of assumptions being made there about what things are
> pointing to what other things. Is this actually safe? And if it
> is, perhaps a comment explaining why?
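One way to make a chain of casts like the one quoted above safer is to check each node's tag before casting (the purpose of PostgreSQL's IsA() macro) and to skip the optimization when the plan does not have the expected shape. This is only an illustration under assumptions: Node, Var, FuncExpr, and the T_* tags below are hypothetical miniature stand-ins loosely modeled on PostgreSQL's node types, not the real headers, and first_arg_var() is an invented helper.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical, heavily simplified stand-ins for PostgreSQL node types. */
typedef enum NodeTag
{
	T_Var,
	T_Const,
	T_FuncExpr
} NodeTag;

typedef struct Node
{
	NodeTag		type;
} Node;

typedef struct Var
{
	NodeTag		type;			/* first field, as in all PostgreSQL nodes */
	int			varno;			/* range-table index of the relation */
	int			varattno;		/* attribute number within that relation */
} Var;

typedef struct FuncExpr
{
	NodeTag		type;
	int			nargs;			/* number of entries in args */
	Node	  **args;			/* argument expressions */
} FuncExpr;

/* Same idea as PostgreSQL's IsA(): check the tag before casting. */
#define IsA(ptr, tagname)	(((const Node *) (ptr))->type == T_##tagname)

/*
 * Return the Var that is the first argument of a hash clause, or NULL if the
 * clause does not have the expected shape.  Each cast is preceded by a tag
 * check, so an unexpected plan shape yields NULL instead of a bad pointer.
 */
static const Var *
first_arg_var(const Node *clause)
{
	const FuncExpr *func;

	if (clause == NULL || !IsA(clause, FuncExpr))
		return NULL;
	func = (const FuncExpr *) clause;
	if (func->nargs < 1 || func->args[0] == NULL || !IsA(func->args[0], Var))
		return NULL;
	return (const Var *) func->args[0];
}
```

If first_arg_var() returns NULL, a caller could simply fall back to an ordinary hash join without MCV statistics rather than dereferencing a pointer of the wrong type.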

>

> getMostCommonValues() also appears to be creating and maintaining a
> counter called collisionsWhileHashing, but nothing is ever done with
> the counter. On a similar note, the variables relattnum, atttype, and
> atttypmod don't appear to be necessary; 2 out of 3 of them are only
> used once, so maybe inlining the reference and dropping the variable
> would make more sense. Also, the if (HeapTupleIsValid(statsTuple))
> block encompasses the whole rest of the function; maybe
> if (!HeapTupleIsValid(statsTuple)) return; instead?
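The early-return suggestion can be sketched as follows. StatsTuple and load_mcvs() are hypothetical stand-ins (a NULL pointer plays the role of !HeapTupleIsValid(statsTuple)); the point is only that the guard clause keeps the main logic at one level of indentation instead of nesting it inside one large if-block.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for the MCV slot of a pg_statistic tuple. */
typedef struct StatsTuple
{
	int			nvalues;		/* number of most-common values */
	const int  *values;			/* the most-common values themselves */
} StatsTuple;

/*
 * Copy up to capacity MCVs into dest and return how many were copied.
 * The guard clause handles "no statistics" up front instead of wrapping
 * the rest of the function in an if-block.
 */
static int
load_mcvs(const StatsTuple *stats, int *dest, int capacity)
{
	int			n;
	int			i;

	if (stats == NULL)			/* analogous to !HeapTupleIsValid(statsTuple) */
		return 0;

	assert(dest != NULL || capacity == 0);
	n = (stats->nvalues < capacity) ? stats->nvalues : capacity;
	for (i = 0; i < n; i++)
		dest[i] = stats->values[i];
	return n;
}
```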

>

> I don't understand why
> hashtable->mostCommonTuplePartition[bucket].tuples and .frozen need to
> be initialized to 0. It looks to me like those are in a zero-filled
> array that was just allocated, so it shouldn't be necessary to re-zero
> them, unless I'm missing something.
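The point about re-zeroing can be illustrated with calloc(), which, like PostgreSQL's palloc0(), returns zero-filled memory. MCVPartition and create_partitions() are hypothetical names; the struct only mirrors the tuples and frozen fields mentioned above and assumes both are integers (for integer types, all-bits-zero is guaranteed to read as 0).

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical per-MCV partition entry mirroring the fields discussed. */
typedef struct MCVPartition
{
	int			tuples;			/* number of tuples held for this MCV */
	int			frozen;			/* nonzero once the partition is flushed */
} MCVPartition;

/*
 * Allocate n zero-filled partitions.  Because calloc() (like palloc0())
 * already zeroes the memory, no loop setting tuples and frozen to 0 is
 * needed afterwards.
 */
static MCVPartition *
create_partitions(size_t n)
{
	assert(n > 0);
	return calloc(n, sizeof(MCVPartition));
}
```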

>

> freezeNextMCVPartiton is misspelled consistently throughout (the last
> three letters should be "ion"). I also don't think it makes sense to
> enclose everything but the first two lines of that function in an
> else-block.

>

> There is some initialization code in ExecHashJoin() that looks like it
> belongs in ExecHashTableCreate.

>

> It appears to me that the interface to isAMostCommonValue() could be
> simplified by just making it return the partition number. It could
> perhaps be renamed something like ExecHashGetMCVPartition().
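A minimal sketch of the suggested interface, assuming the MCVs are kept in a small open-addressing (linear probing) table keyed by hash value: the lookup returns the slot number directly, or a sentinel when the value is not an MCV, instead of a boolean plus an output parameter. MCVTable, mcv_insert(), mcv_get_partition(), N_MCV_SLOTS, and INVALID_MCV_PARTITION are hypothetical names, and reserving hash value 0 to mark empty slots is a simplification of this sketch.

```c
#include <assert.h>
#include <stdint.h>

#define N_MCV_SLOTS 8				/* power of two, more than the #MCVs */
#define INVALID_MCV_PARTITION (-1)

/* Hypothetical open-addressing table of MCV hash values; 0 = empty slot. */
typedef struct MCVTable
{
	uint32_t	hashvalue[N_MCV_SLOTS];
} MCVTable;

/* Insert hv with linear probing; return its slot, or -1 if the table is full. */
static int
mcv_insert(MCVTable *t, uint32_t hv)
{
	int			i = (int) (hv & (N_MCV_SLOTS - 1));
	int			probes;

	assert(hv != 0);				/* 0 is reserved to mark empty slots */
	for (probes = 0; probes < N_MCV_SLOTS; probes++)
	{
		if (t->hashvalue[i] == 0 || t->hashvalue[i] == hv)
		{
			t->hashvalue[i] = hv;
			return i;
		}
		i = (i + 1) & (N_MCV_SLOTS - 1);
	}
	return INVALID_MCV_PARTITION;
}

/*
 * Return the partition (slot) number holding hv, or INVALID_MCV_PARTITION
 * if hv is not an MCV.  One int result replaces a bool plus an out-parameter.
 */
static int
mcv_get_partition(const MCVTable *t, uint32_t hv)
{
	int			i = (int) (hv & (N_MCV_SLOTS - 1));
	int			probes;

	for (probes = 0; probes < N_MCV_SLOTS && t->hashvalue[i] != 0; probes++)
	{
		if (t->hashvalue[i] == hv)
			return i;
		i = (i + 1) & (N_MCV_SLOTS - 1);
	}
	return INVALID_MCV_PARTITION;
}
```

With a single int result, a caller can test the return value against INVALID_MCV_PARTITION once and then use it directly as the partition index.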

>

> Does ExecHashTableDestroy() need to explicitly pfree
> hashtable->mostCommonTuplePartition and
> hashtable->flushOrderedMostCommonTuplePartition? I would think those
> would be allocated out of hashCxt; if they aren't, they probably
> should be.
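The reasoning behind the pfree question is that memory allocated in a memory context is released when the context itself is deleted, so individual frees become unnecessary. The bump allocator below is a hypothetical, much-simplified stand-in for that idea; Arena, arena_init(), arena_alloc(), and arena_destroy() are invented names, not PostgreSQL's MemoryContext API.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical bump allocator standing in for a context such as hashCxt. */
typedef struct Arena
{
	char	   *base;			/* one block that backs every allocation */
	size_t		used;			/* bytes handed out so far */
	size_t		size;			/* total bytes in the block */
} Arena;

static int
arena_init(Arena *a, size_t size)
{
	a->base = malloc(size);
	a->used = 0;
	a->size = (a->base != NULL) ? size : 0;
	return a->base != NULL;
}

/* Carve n bytes out of the arena; there is no per-object free. */
static void *
arena_alloc(Arena *a, size_t n)
{
	void	   *p;

	assert(a->base != NULL);
	n = (n + 15) & ~(size_t) 15;	/* round up to keep 16-byte offsets */
	if (a->size - a->used < n)
		return NULL;
	p = a->base + a->used;
	a->used += n;
	return p;
}

/* A single call releases everything, like deleting the memory context. */
static void
arena_destroy(Arena *a)
{
	free(a->base);
	a->base = NULL;
	a->used = 0;
	a->size = 0;
}
```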

>

> Department of minor nitpicks: (1) C++-style comments are not
> permitted, (2) function names need to be capitalized like_this() or
> LikeThis() but not likeThis(), (3) when defining a function, the
> return type should be placed on the line preceding the actual function
> name, so that the function name is at the beginning of the line, (4)
> curly braces should be avoided around a block containing only one
> statement, (5) excessive blank lines should be avoided (for example,
> the one in costsize.c is clearly unnecessary, and there's at least one
> place where you add two consecutive blank lines), and (6) I believe
> the accepted way to write an empty loop is an indented semi-colon on
> the next line, rather than {} on the same line as the while.
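As a rough illustration of several of those conventions together, here is a hypothetical helper (count_leading_spaces() is invented for this example): C-style comments, a lower_case function name, the return type on its own line, no braces around a single-statement if, and an empty loop written with the semicolon on its own indented line. It is also static, in line with the earlier advice about functions that have only one call site. This is a sketch of the style as described in the review, not a substitute for the project's own formatting rules.

```c
#include <assert.h>
#include <stddef.h>

/*
 * count_leading_spaces
 *		Hypothetical helper showing the layout conventions listed above.
 *
 * The return type sits on its own line so the function name starts the
 * next line, the name uses lower_case words, comments are C-style, and the
 * function is static because it would have only one caller.
 */
static size_t
count_leading_spaces(const char *s)
{
	size_t		n;

	if (s == NULL)
		return 0;				/* single statement, so no braces */

	for (n = 0; s[n] == ' '; n++)
		;						/* empty loop: indented semicolon on its own line */

	return n;
}
```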

>

> I will try to do some more substantive testing of this as well.

>

> ...Robert

>

| From: | "Robert Haas" <robertmhaas(at)gmail(dot)com> |

|---|---|

| To: | "Bryce Cutt" <pandasuit(at)gmail(dot)com> |

| Cc: | "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, "Tom Lane" <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org |

| Subject: | Re: Proposed Patch to Improve Performance of Multi-Batch Hash Join for Skewed Data Sets |

| Date: | 2008-12-22 03:25:59 |

| Message-ID: | 603c8f070812211925hdb4db79o3aeed87c601199a3@mail.gmail.com |

| Lists: | pgsql-hackers |

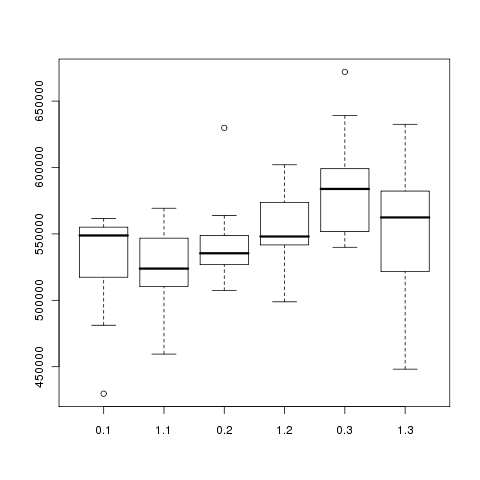

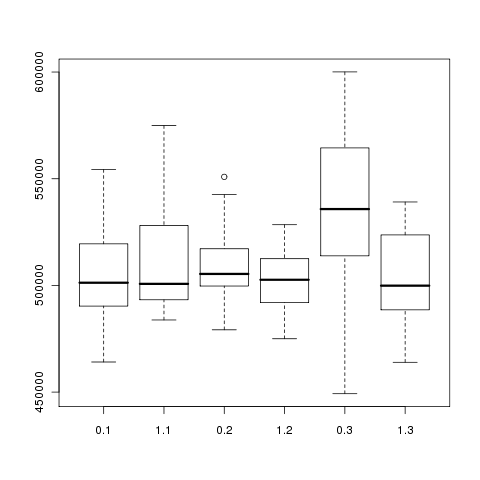

[Some performance testing.]

I ran this query 10x with this patch applied, and then 10x again with
enable_hashjoin_usestatmvcs set to false to disable the optimization:

select sum(1) from (select * from part, lineitem where p_partkey = l_partkey) x;

With the optimization enabled, the query took between 26.6 and 38.3
seconds, with an average of 31.6 seconds. With the optimization
disabled, the query took between 48.3 and 69.0 seconds, with an
average of 60.0 seconds.
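Working from the averages above, 60.0 s versus 31.6 s is a speedup of about 1.9x, or roughly 47% less elapsed time, which is roughly in line with the 10% to 50% improvement range claimed for skewed data in the original proposal. The helper functions below (hypothetical names) just make that arithmetic explicit.

```c
#include <assert.h>

/* Ratio of baseline runtime to optimized runtime, e.g. 60.0 / 31.6. */
static double
speedup(double baseline_s, double optimized_s)
{
	assert(optimized_s > 0.0);
	return baseline_s / optimized_s;
}

/* Fraction of the baseline runtime saved, e.g. (60.0 - 31.6) / 60.0. */
static double
fraction_saved(double baseline_s, double optimized_s)
{
	assert(baseline_s > 0.0);
	return (baseline_s - optimized_s) / baseline_s;
}
```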

It appears that the 100 entries in pg_statistic for l_partkey cover about 32% of lineitem's rows:

tpch=# WITH x AS (

SELECT stanumbers1, array_length(stanumbers1, 1) AS len

FROM pg_statistic WHERE starelid='lineitem'::regclass

AND staattnum = (SELECT attnum FROM pg_attribute

WHERE attrelid='lineitem'::regclass AND

attname='l_partkey')

)

SELECT sum(x.stanumbers1[y.g]) FROM x,

(select generate_series(1, x.len) g from x) y;

sum

--------

0.3276

(1 row)

(there's probably a better way to write that query...)

stadistinct for l_partkey is 23,050; the actual number of distinct
values is 199,919. In other words, 0.05% of the distinct values (100
out of 199,919) account for 32.76% of the table. That's a lot of skew,
but not unrealistic; I've seen tables where more than half of the rows
were covered by a single value.
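The skew figures can be double-checked with a little arithmetic: 100 MCV entries out of 199,919 actual distinct values is about 0.05% of the distinct values, and the actual distinct count is about 8.7 times the stadistinct estimate of 23,050. The sketch below (hypothetical helper names) performs those calculations and also sums an MCV frequency array the way the SQL query above sums stanumbers1; any frequencies passed to it in testing are made up, not taken from pg_statistic.

```c
#include <assert.h>

/* Sum an array of MCV frequencies (each a fraction of the table's rows). */
static double
sum_frequencies(const double *freqs, int n)
{
	double		total = 0.0;
	int			i;

	for (i = 0; i < n; i++)
		total += freqs[i];
	return total;
}

/* part as a percentage of whole, e.g. 100 MCVs out of 199,919 values. */
static double
percent_of(double part, double whole)
{
	assert(whole > 0.0);
	return 100.0 * part / whole;
}
```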

...Robert

| From: | Joshua Tolley <eggyknap(at)gmail(dot)com> |

|---|---|

| To: | Robert Haas <robertmhaas(at)gmail(dot)com> |

| Cc: | Bryce Cutt <pandasuit(at)gmail(dot)com>, "Lawrence, Ramon" <ramon(dot)lawrence(at)ubc(dot)ca>, Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us>, pgsql-hackers(at)postgresql(dot)org |